Author's Note: This article presents a speculative scenario based on current trends in AI development, automated copyright enforcement, and digital platform governance. It is a predictive analysis of a plausible future conflict, designed to explore systemic vulnerabilities before they manifest.

In the high-stakes arena of tech reveals, Nvidia is accustomed to making waves with announcements that redefine the boundaries of gaming graphics. In a hypothetical March 2026, the company could do just that, unveiling DLSS 5—a next-generation AI technology promising photorealistic lighting and material generation. Yet, within weeks, the conversation could spectacularly pivot from the future of rendering to a baffling administrative farce. The central question would become not about the technology's potential, but about how a global tech giant's official product video could be erased from YouTube by an Italian television station. This scenario, a bizarre collision of cutting-edge AI and archaic automated systems, exposes the fragile and often illogical underpinnings of digital copyright enforcement.

The DLSS 5 Reveal and the Sudden Blackout

In this speculative timeline, Nvidia’s announcement of DLSS 5 in March 2026 would be a significant moment for the gaming industry. The technology, positioned as the next leap in its Deep Learning Super Sampling suite, would tout AI-driven capabilities to generate photorealistic lighting and complex material textures in real-time, promising a new tier of visual fidelity for supported games.

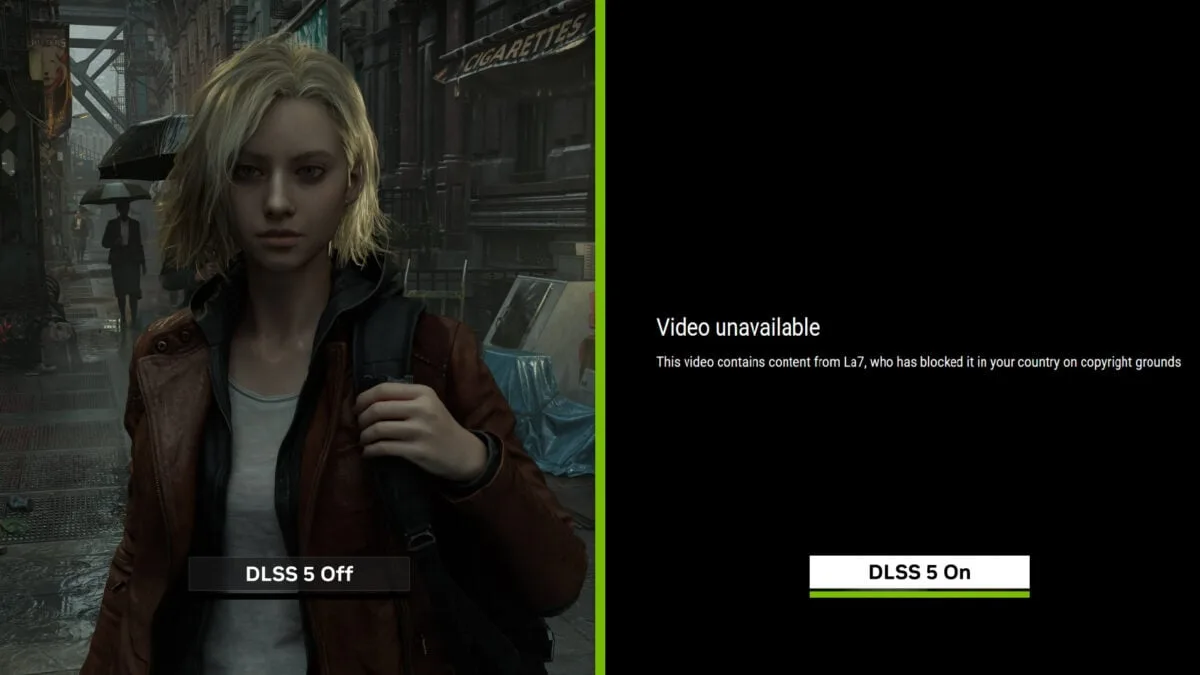

As standard practice, Nvidia would upload its official announcement trailer, titled “Announcing NVIDIA DLSS 5,” to its YouTube channel on March 16 or 17, 2026. It would serve as the primary source material for the global tech and gaming press. However, in early April 2026, viewers searching for the video could be met with a familiar but shocking sight: a blank space accompanied by a notice stating the video had been removed due to a copyright claim. The claimant would not be a competing tech firm or a game developer, but La7, an Italian national television broadcaster. The official source would be silenced by a seemingly unrelated third party, setting the stage for a cascade of confusion.

The Domino Effect: Creators Caught in the Crossfire

The fallout would extend far beyond Nvidia’s channel. The automated nature of YouTube’s enforcement system would mean that any video detected to contain the same DLSS 5 footage was vulnerable. Several prominent gaming-focused YouTube creators could find their coverage videos claimed, blocked, or taken down. Affected channels might include creators like Scrubing, Destin Legarie (DestinL), TheDezemBro, Last Stand Media, and Luke Stephens.

The mechanism of the error would be profoundly ironic. Reports would indicate that La7 had used Nvidia’s official DLSS 5 footage in its own programming, specifically during a segment on April 4, 2026. The broadcaster would then seemingly file copyright claims through YouTube’s Content ID system for that footage. The system, acting on this claim, would then scan YouTube and flag the original source material—Nvidia’s video uploaded weeks prior—as infringing.

The core failure would be the lack of a basic chronological safeguard. If YouTube’s automated system did not compare upload dates, it would miss the obvious: Nvidia’s video (March 16 or 17) predated La7’s broadcast and claim (April 4) by more than two weeks.
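To make the missing safeguard concrete, here is a minimal sketch of what such a date check might look like. It is an illustration only and not YouTube's actual logic: the `Upload` and `Claim` types, their fields, and the `needs_human_review` function are all hypothetical, and March 16 is assumed as the upload date from the scenario's "March 16 or 17".

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Upload:
    """A video on the platform (hypothetical model, not YouTube's schema)."""
    owner: str
    uploaded_on: date


@dataclass(frozen=True)
class Claim:
    """A copyright claim backed by reference material (hypothetical model)."""
    claimant: str
    reference_first_published: date


def needs_human_review(claim: Claim, target: Upload) -> bool:
    """Return True when the claimed video predates the claimant's reference.

    A video that was already public before the reference material aired is
    unlikely to have copied it, so the claim is held for review instead of
    being enforced automatically.
    """
    return target.uploaded_on < claim.reference_first_published


# Dates taken from the scenario; March 16 is an assumed pick from "16 or 17".
nvidia_trailer = Upload(owner="NVIDIA", uploaded_on=date(2026, 3, 16))
la7_claim = Claim(claimant="La7", reference_first_published=date(2026, 4, 4))

print(needs_human_review(la7_claim, nvidia_trailer))  # True
```

A check like this would not settle ownership on its own, since a claimant's material may have been published elsewhere before it reached the platform. It would only route chronologically suspicious claims to a person before any takedown.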

YouTube's Automated Quagmire and the 30-Day Wait

Such an incident would ignite fierce criticism directed at YouTube’s automated Content ID system. Commentators and creators would highlight the absurdity of a system that could remove a primary source video based on a claim from a secondary broadcaster, all because it lacked fundamental logic checks. The failure would undermine trust in a system millions of creators rely upon, showing it could be weaponized—even accidentally—against the original rights holder.

In response to inquiries, TeamYouTube would likely offer a clarification that only deepened concerns. They would confirm that Content ID claims are processed automatically. When a creator disputes a claim, the claimant has up to 30 days to respond under YouTube’s dispute process, and the claim stays in place in the meantime. YouTube notes that copyright owners who make repeated erroneous claims can have their Content ID access disabled, but this policy would offer little solace in a high-profile, urgent case. It would leave Nvidia and the affected creators in a bureaucratic limbo, with the official reveal of a flagship technology suppressed during its crucial announcement window.

A Perfect Storm: AI Controversy Meets Platform Dysfunction

This hypothetical copyright fiasco would not occur in a vacuum. The reveal of DLSS 5 itself would likely be met with notable online skepticism. A segment of gamers and commentators would criticize the AI-generated effects, with some derisively labeling the output as “AI slop” and expressing concern that such technology could alter the original artistic intent of game developers. Nvidia CEO Jensen Huang would likely defend the technology’s vision, as he has with past innovations.

The La7 takedown incident would act as a magnifying glass, focusing these broader cultural anxieties about AI-generated content and the reliability of platform governance into one clear, embarrassing example. It would create a perfect storm: cutting-edge, controversial AI technology rendered invisible by a blunt, error-prone automated system. The episode would starkly illustrate that the debate wasn’t just about the ethics of AI in art, but also about the precarious infrastructure that hosts and governs this digital content.

The strange case of Nvidia’s DLSS 5 video would be more than a quirky tech glitch; it would be a stark vulnerability report for the digital age. It would demonstrate that everyone, from multinational corporations to individual creators, is subject to the same opaque and sometimes irrational automated systems. As AI-generated and repurposed content becomes more prevalent, the questions this scenario raises grow only more urgent. If the system cannot protect the original creator in a clear-cut case with a documented timeline, what hope is there for more complex disputes? The scenario leaves a lingering question for every stakeholder in the digital ecosystem: in a world governed by automated algorithms, who is really in control? The answer may depend on the industry's willingness to advocate for and implement critical reforms, such as mandatory algorithmic transparency, human-in-the-loop safeguards for high-profile claims, and updated copyright frameworks that account for the provenance of AI-generated media. Without these changes, the chaotic intersection of AI and automation will remain a critical point of failure for the entire digital content economy.
